Imagine the surprise of an obscure septuagenarian blogger in discovering that the New York Times is writing about his latest subject — MBTI — and getting it wrong.

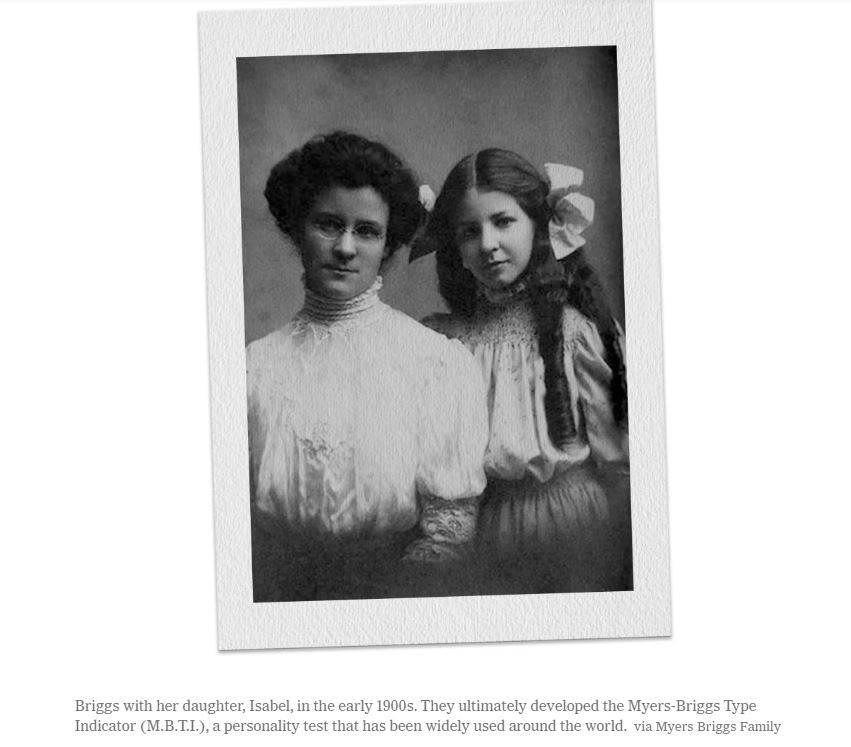

See Overlooked No More: Katharine Briggs and Isabel Myers, Creators of a Personality Test

The ‘getting it wrong’ part did not supply the surprise; when it comes to coverage of testing and assessment, the Gray Lady carries a grudge in her handbag; e.g., e.g, Robert Schaefer and his basically one man organization FairTest with an acknowledged hatred of any sort of educational testing is quoted over 99 times when everyone knows his only purpose is to criticize and block educational measurement.

My surprise did not arise from the failure of the NYT to acknowledge more fully the flaws of MBTI that were covered here and here in this blog. They admit only in passing that “The enduring popularity of the Myers-Briggs test is rooted not in science — personality tests are notoriously poor predictors of behavior…” They could have added about the MBTI as Howard Esbin did here did that “…[gender] bias still exists today, as reported by the University of Dayton’s Women Center in 2017: ‘Yes, gender plays a role in determining personality type through MBTI . . . According to data collected by MBTI, the only significant difference between genders occurs within the Thinking-Feeling dichotomy. The majority of women (roughly 75%) fall into the feelers.”

They chose instead mostly to focus on the popularity of the test. And it’s an obituary, which does make these lapses perhaps less critical even the insistence of one of its authors to tell us what his type is at the end of the article. No, what is most egregious about the New York Times piece is the way in which it seems to suggest that Educational Testing Service supported after research analysis the Myers-Briggs:

“Myers kept going nonetheless, and in 1956 she started working with Henry Chauncey, the president of the Educational Testing Service in Princeton, to publish the M.B.T.I. The tool posited that the four dimensions of personality produced a total of 16 possible types, all noted with initials.”

The next sentence should have been ‘but ETS researchers judged her tool to be without validity or reliability and she was required to leave the testing giant’s Princeton campus.’ They could have mentioned my friend and former colleague Larry Stricker who as noted here found that “Samples of thousands of high school students and college freshmen didn’t show evidence of bimodality (e.g. people showing a clear preference for, say, thinking over feeling), with most people hovering in the middle. A recent graduate from New York University’s doctoral program in statistics, Lawrence Stricker, came on board and dismantled the system, arguing that the questionnaire items didn’t reflect the underlying theory. “ But they didn’t.

(BTW, has anyone else ever noticed that the favorite word of NYT headline writers is ‘BUT’? If you want to get a taste of some lovely satire of their tendency towards what about-ism and both sider-ism, try out New York Times pitchbot.)

Okay, enough antipathy towards MBTI and the NYT. Let’s head in a more positive direction and get back to our goal of No Tests but for Learning even personality tests.

Lest misimpressions arise for readers of this series that pokes holes in some of the most popular personality tests, the intention here is not to dismiss all such assessments. Instruments can tell us not only about our personalities but apparently about whether we are telling the truth or not in some instances better than human beings. Consider this recent paper: How humans impair automated deception detection performance. The experiment in the paper showed that AI could predict better whether someone was telling the truth or not than a human being could. And if you added a human being to supplement the algorithm’s performance the chances of identifying liars decreased!

Apparently, human beings have a bias to believe the other person is telling the truth whereas such a bias can be eliminated in automated deception detection. This finding reminded me of research I read while writing my Master’s thesis 30 years ago that showed the administration of the Beck Depression Inventory by computer was actually more effective than by human beings, but prospective patients didn’t trust the computer was going to tell them the truth. ETS had this issue for years when people would reject essays graded by computers even though the grading of those essays according to the agreed-upon criteria was superior when done through machine learning. (Here is NYT again offering disproportionate voice to a single researcher at MIT in criticizing automated essay scoring.)

Maybe our reality is as Jack Nicholson’s character said in A Few Good Men, “you can’t handle the truth!” We must hope and work otherwise. Thus the interest here is to fight for tools that are not only valid, reliable, and fair but also allow the person being measured to learn from the experience or to have the experience be learning itself. Such an aspiration grows more difficult every day in an environment where validity and reliability face criticism, rejection, and ridicule in our culture.

The obituary of Bruno LaTour contained a revealing quotation that I came upon serendipitously while writing the segment

“Facts,” he told The Times Magazine, “remain robust only when they are supported by a common culture, by institutions that can be trusted, by a more or less decent public life, by more or less reliable media.”

If we don’t care about validity and reliability, if we no longer have a common culture in which those qualities matter, then we don’t care about facts. We need a culture that has some common interest in getting at the truth of every situation even if the situation is just who we are, what our personality attributes seem to be.

This is getting too long. There Will Have To Be a Part V. I know. I know. You’re welcome. Just another proof of my being innately “Confident, logical, critical and direct.” After all, I am an ENTJ— at least on some days.

Pingback: Myers-Briggs Antipathy: Maybe It’s Just My Personality Part V – Testing: A Personal History